Deploy a Micronaut function as a GraalVM Native Executable to AWS Lambda

Learn how to distribute a Micronaut function built as a GraalVM Native executable to an AWS Lambda custom runtime.

Authors: Sergio del Amo

Micronaut Version: 4.10.9

1. Introduction

Please read about Micronaut AWS Lambda Support to learn more about the different Lambda runtimes, triggers, and handlers, and how to integrate them with a Micronaut application.

The biggest problem with Java applications on Lambda is mitigating cold starts. Running a GraalVM Native executable of a Micronaut function in a Lambda custom runtime is one solution to this problem.

If you want to respond to triggers such as queue events, S3 events, or single endpoints, you should opt to code your Micronaut functions as Serverless functions.
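To make the idea of a trigger-driven function concrete, here is a minimal sketch of a handler that reacts to S3 events. The class name S3ObjectCreatedHandler and the String return value are hypothetical and not part of the generated project; the sketch assumes the aws-lambda-java-events library is on the classpath.

```kotlin
package example.micronaut

import com.amazonaws.services.lambda.runtime.events.S3Event
import io.micronaut.function.aws.MicronautRequestHandler

// Hypothetical handler, for illustration only: it is not generated by the CLI.
class S3ObjectCreatedHandler : MicronautRequestHandler<S3Event, String>() {

    // Collects "bucket/key" for every record in the incoming S3 event.
    override fun execute(input: S3Event): String =
        input.records.joinToString(",") { record ->
            "${record.s3.bucket.name}/${record.s3.`object`.key}"
        }
}
```

The input type parameter is what ties the function to a trigger: swapping S3Event for another event class from the same library would target a different event source.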

2. Getting Started

In this guide, we will deploy a Micronaut function written in Kotlin as a GraalVM Native executable to an AWS Lambda custom runtime.

3. What you will need

To complete this guide, you will need the following:

- Some time on your hands

- A decent text editor or IDE (e.g. IntelliJ IDEA)

- JDK 21 or greater installed with JAVA_HOME configured appropriately

4. Solution

We recommend that you follow the instructions in the next sections and create the application step by step. However, you can go right to the completed example.

- Download and unzip the source

5. Writing the Application

Create an application using the Micronaut Command Line Interface or with Micronaut Launch.

    mn create-function-app example.micronaut.micronautguide --features=graalvm,aws-lambda --build=maven --lang=kotlin

If you don’t specify the --build argument, Gradle with the Kotlin DSL is used as the build tool. If you don’t specify the --lang argument, Java is used as the language. If you don’t specify the --test argument, JUnit is used for Java and Kotlin, and Spock is used for Groovy.

The previous command creates a Micronaut application with the default package example.micronaut in a directory named micronautguide.

If you use Micronaut Launch, select Serverless function as application type and add the graalvm and aws-lambda features.

6. Code

The generated project contains sample code. Let’s explore it.

The application contains a class extending MicronautRequestHandler:

    package example.micronaut

    import com.amazonaws.services.lambda.runtime.events.APIGatewayProxyRequestEvent
    import com.amazonaws.services.lambda.runtime.events.APIGatewayProxyResponseEvent
    import io.micronaut.function.aws.MicronautRequestHandler
    import io.micronaut.json.JsonMapper
    import jakarta.inject.Inject
    import java.io.IOException

    class FunctionRequestHandler : MicronautRequestHandler<APIGatewayProxyRequestEvent, APIGatewayProxyResponseEvent>() {

        @Inject
        lateinit var objectMapper: JsonMapper

        override fun execute(input: APIGatewayProxyRequestEvent): APIGatewayProxyResponseEvent {
            val response = APIGatewayProxyResponseEvent()
            try {
                // Serialize the payload with the injected JsonMapper
                val json = String(objectMapper.writeValueAsBytes(mapOf("message" to "Hello World")))
                response.statusCode = 200
                response.body = json
            } catch (e: IOException) {
                response.statusCode = 500
            }
            return response
        }
    }

The class extends MicronautRequestHandler and defines input and output types.

The generated test shows how to verify the function behaviour:

    package example.micronaut

    import com.amazonaws.services.lambda.runtime.events.APIGatewayProxyRequestEvent
    import org.junit.jupiter.api.Assertions.assertEquals
    import org.junit.jupiter.api.Test

    class FunctionRequestHandlerTest {

        @Test
        fun testHandler() {
            val handler = FunctionRequestHandler()
            val request = APIGatewayProxyRequestEvent()
            request.httpMethod = "GET"
            request.path = "/"
            val response = handler.execute(request)
            assertEquals(200, response.statusCode.toInt())
            assertEquals("{\"message\":\"Hello World\"}", response.body)
            handler.close()
        }
    }

- When you instantiate the handler, the application context starts.

- Remember to close your application context when you end your test. You can use your handler to obtain it.

- Invoke the execute method of the handler.
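One possible variation on the generated test, sketched here under the assumption that the handler implements Closeable (it exposes close()), is to wrap it in Kotlin's use function so the application context is closed even when an assertion fails. The test class name below is hypothetical.

```kotlin
package example.micronaut

import com.amazonaws.services.lambda.runtime.events.APIGatewayProxyRequestEvent
import org.junit.jupiter.api.Assertions.assertEquals
import org.junit.jupiter.api.Test

// Hypothetical test class, for illustration only.
class FunctionRequestHandlerUseTest {

    @Test
    fun handlerIsClosedAfterUse() {
        // use() calls close() on the handler when the block exits,
        // whether it returns normally or throws.
        FunctionRequestHandler().use { handler ->
            val request = APIGatewayProxyRequestEvent().apply {
                httpMethod = "GET"
                path = "/"
            }
            val response = handler.execute(request)
            assertEquals(200, response.statusCode.toInt())
        }
    }
}
```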

7. Testing the Application

To run the tests:

    ./mvnw test

8. Lambda

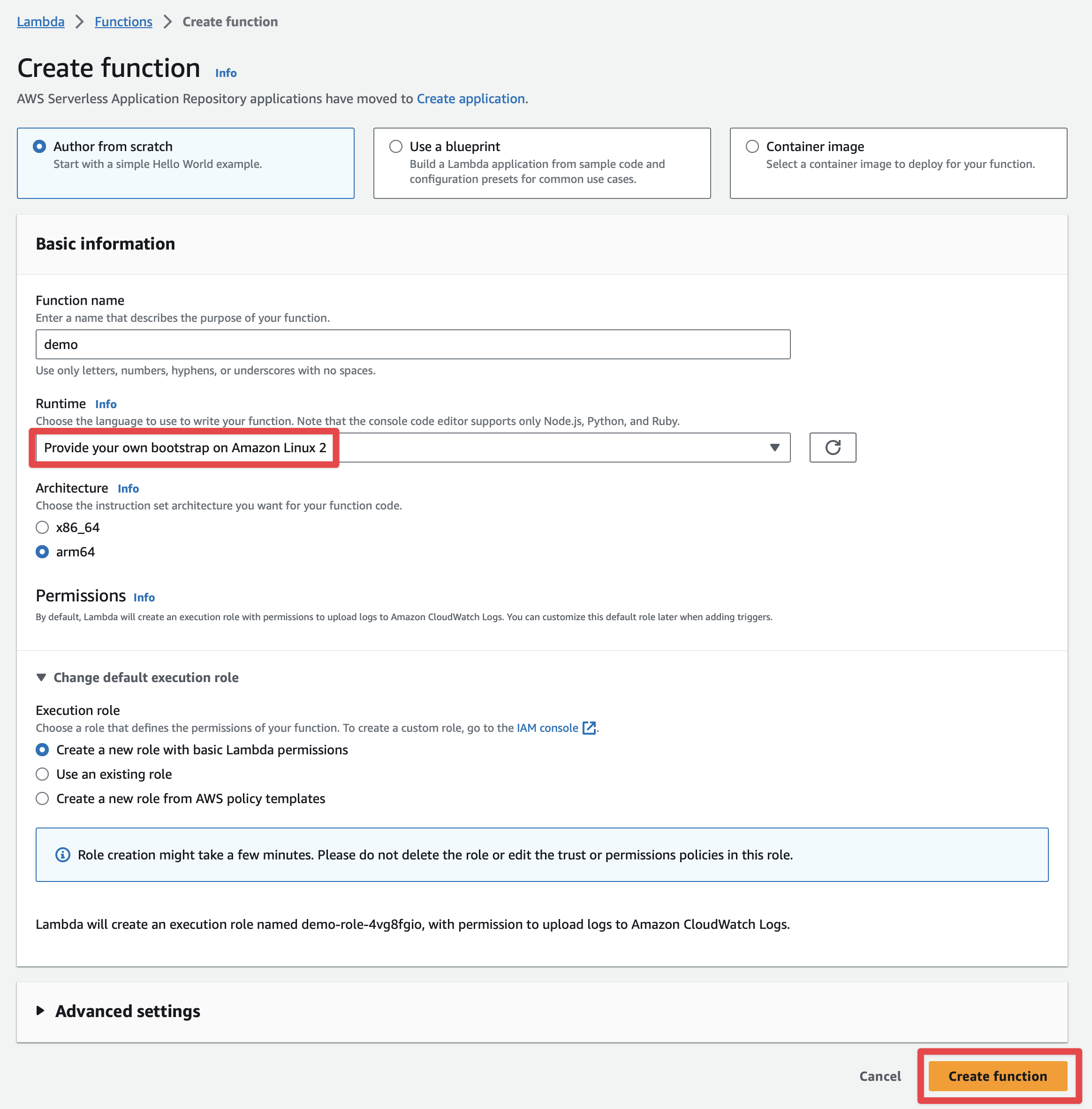

Create a Lambda Function. As a runtime, select Custom Runtime. Select the architecture, x86_64 or arm64, that matches the architecture of the computer you will use to build the GraalVM Native image.
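If you are unsure which architecture your build machine has, a small sketch like the following can help. It maps the JVM os.arch system property to the label used in the Lambda console. The function name lambdaArchitectureFor is hypothetical, and the mapping only covers common values.

```kotlin
// Maps a JVM os.arch value to the architecture label shown in the Lambda console.
// Hypothetical helper; only common os.arch values are handled.
fun lambdaArchitectureFor(osArch: String): String = when (osArch.lowercase()) {
    "amd64", "x86_64" -> "x86_64"
    "aarch64", "arm64" -> "arm64"
    else -> throw IllegalArgumentException("Unsupported architecture: $osArch")
}

fun main() {
    // Prints the label matching the machine running this program.
    println(lambdaArchitectureFor(System.getProperty("os.arch")))
}
```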

The Micronaut framework eases the deployment of your functions as a Custom AWS Lambda runtime.

The main API you will interact with is AbstractMicronautLambdaRuntime. This is an abstract class which you can subclass to create your custom runtime mainClass. That class includes the code to perform the Processing Tasks described in the Custom Runtime documentation.
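To give a feel for what those processing tasks involve, here is a simplified sketch of the loop a custom runtime performs against the Lambda Runtime API: fetch the next event, run a handler, and post the result. It is not how AbstractMicronautLambdaRuntime is implemented. The function names are hypothetical, the handler is reduced to a String-to-String function, and error reporting is omitted.

```kotlin
import java.net.URI
import java.net.http.HttpClient
import java.net.http.HttpRequest
import java.net.http.HttpResponse

// Version prefix of the Lambda Runtime API.
const val RUNTIME_API_VERSION = "2018-06-01"

// URI polled to obtain the next invocation event.
fun nextInvocationUri(runtimeApi: String): URI =
    URI.create("http://$runtimeApi/$RUNTIME_API_VERSION/runtime/invocation/next")

// URI that receives the handler result for a given request id.
fun invocationResponseUri(runtimeApi: String, requestId: String): URI =
    URI.create("http://$runtimeApi/$RUNTIME_API_VERSION/runtime/invocation/$requestId/response")

// Hypothetical processing loop: get an event, invoke the handler, post the response.
fun runLoop(runtimeApi: String, handler: (String) -> String) {
    val client = HttpClient.newHttpClient()
    while (true) {
        val next = client.send(
            HttpRequest.newBuilder(nextInvocationUri(runtimeApi)).GET().build(),
            HttpResponse.BodyHandlers.ofString()
        )
        // Lambda identifies each invocation with this response header.
        val requestId = next.headers().firstValue("Lambda-Runtime-Aws-Request-Id").orElseThrow()
        val result = handler(next.body())
        client.send(
            HttpRequest.newBuilder(invocationResponseUri(runtimeApi, requestId))
                .POST(HttpRequest.BodyPublishers.ofString(result))
                .build(),
            HttpResponse.BodyHandlers.discarding()
        )
    }
}

fun main() {
    // AWS_LAMBDA_RUNTIME_API is set inside Lambda; it is absent on a developer machine.
    val runtimeApi = System.getenv("AWS_LAMBDA_RUNTIME_API")
    if (runtimeApi == null) {
        println(nextInvocationUri("127.0.0.1:9001"))
    } else {
        runLoop(runtimeApi) { event -> event } // echo handler, for illustration
    }
}
```

Subclassing AbstractMicronautLambdaRuntime spares you from writing this kind of loop yourself; you only supply the request handler.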

8.1. Upload Code

The generated project contains such a class:

    package example.micronaut

    import com.amazonaws.services.lambda.runtime.RequestHandler
    import com.amazonaws.services.lambda.runtime.events.APIGatewayProxyRequestEvent
    import com.amazonaws.services.lambda.runtime.events.APIGatewayProxyResponseEvent
    import io.micronaut.function.aws.runtime.AbstractMicronautLambdaRuntime
    import java.net.MalformedURLException

    class FunctionLambdaRuntime : AbstractMicronautLambdaRuntime<APIGatewayProxyRequestEvent, APIGatewayProxyResponseEvent, APIGatewayProxyRequestEvent, APIGatewayProxyResponseEvent>() {

        override fun createRequestHandler(vararg args: String?): RequestHandler<APIGatewayProxyRequestEvent, APIGatewayProxyResponseEvent> {
            return FunctionRequestHandler()
        }

        companion object {
            @JvmStatic
            fun main(vararg args: String) {
                try {
                    FunctionLambdaRuntime().run(*args)
                } catch (e: MalformedURLException) {
                    e.printStackTrace()
                }
            }
        }
    }

    ./mvnw package -Dpackaging=docker-native -Dmicronaut.runtime=lambda -Pgraalvm

The above command generates a ZIP file which contains a GraalVM Native Executable of the application, and a bootstrap file which executes the native executable. The GraalVM Native Executable of the application is generated inside a Docker container.

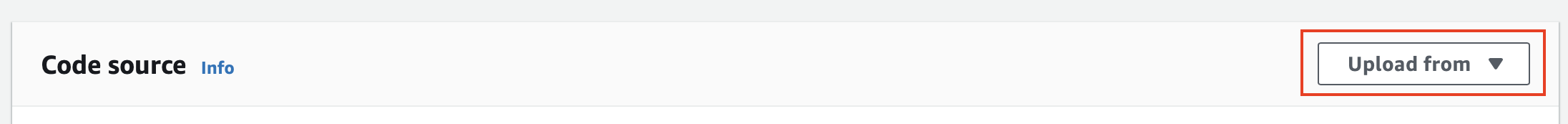

Once you have a ZIP file, upload it:

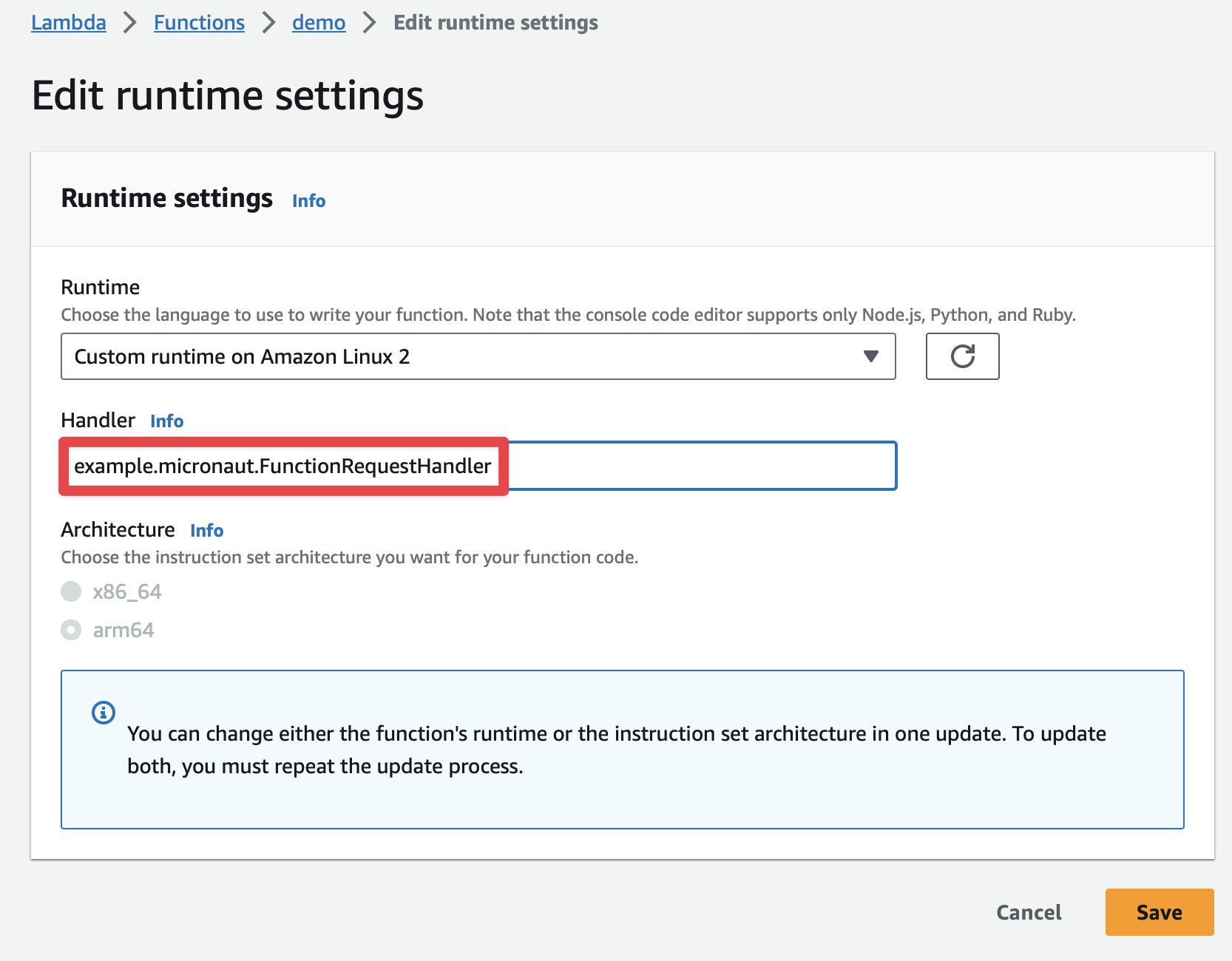

8.2. Handler

The handler used is the one created in FunctionLambdaRuntime.

Thus, you don’t need to specify the handler in the AWS Lambda console.

However, I like to specify it in the console as well:

example.micronaut.FunctionRequestHandler

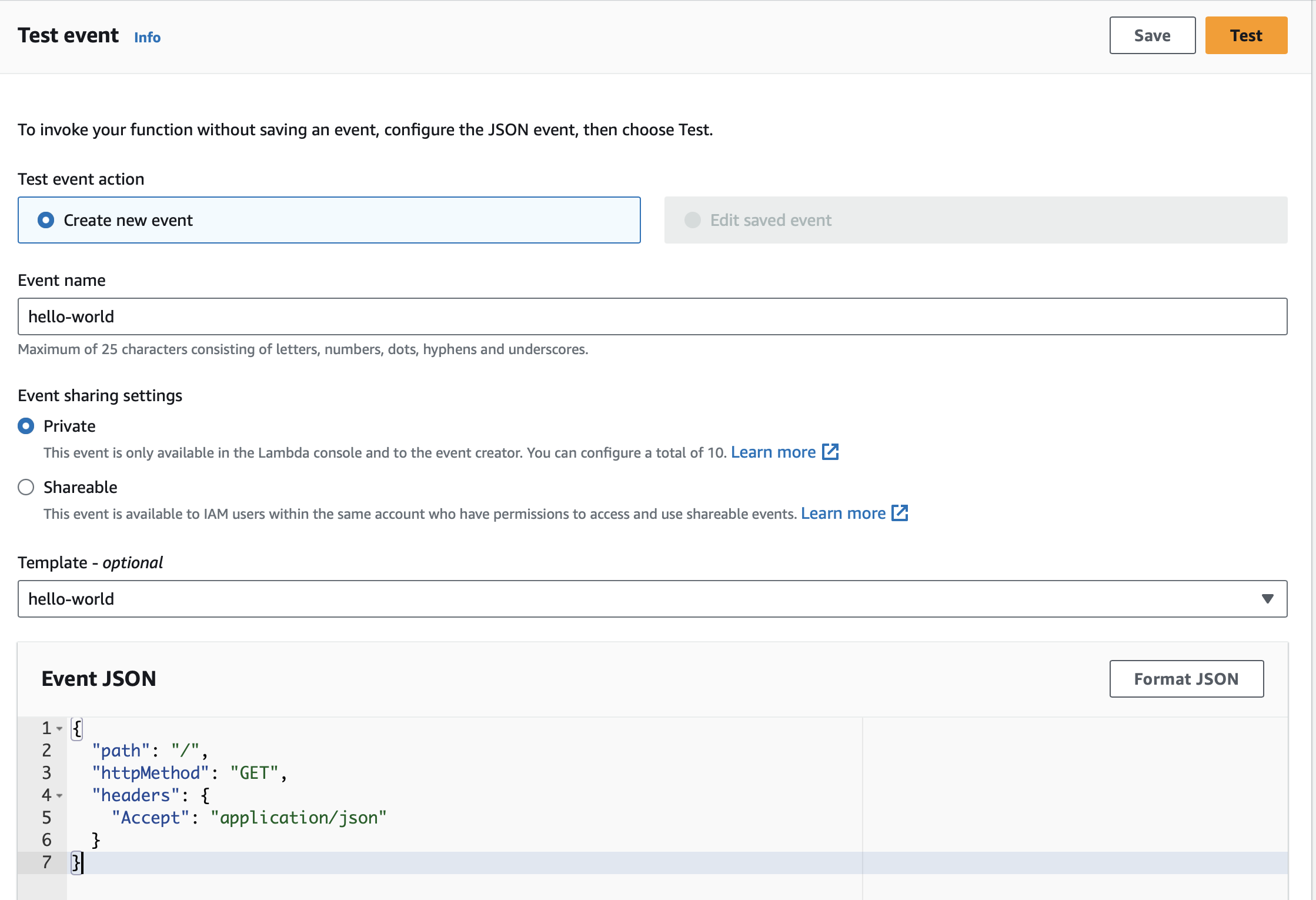

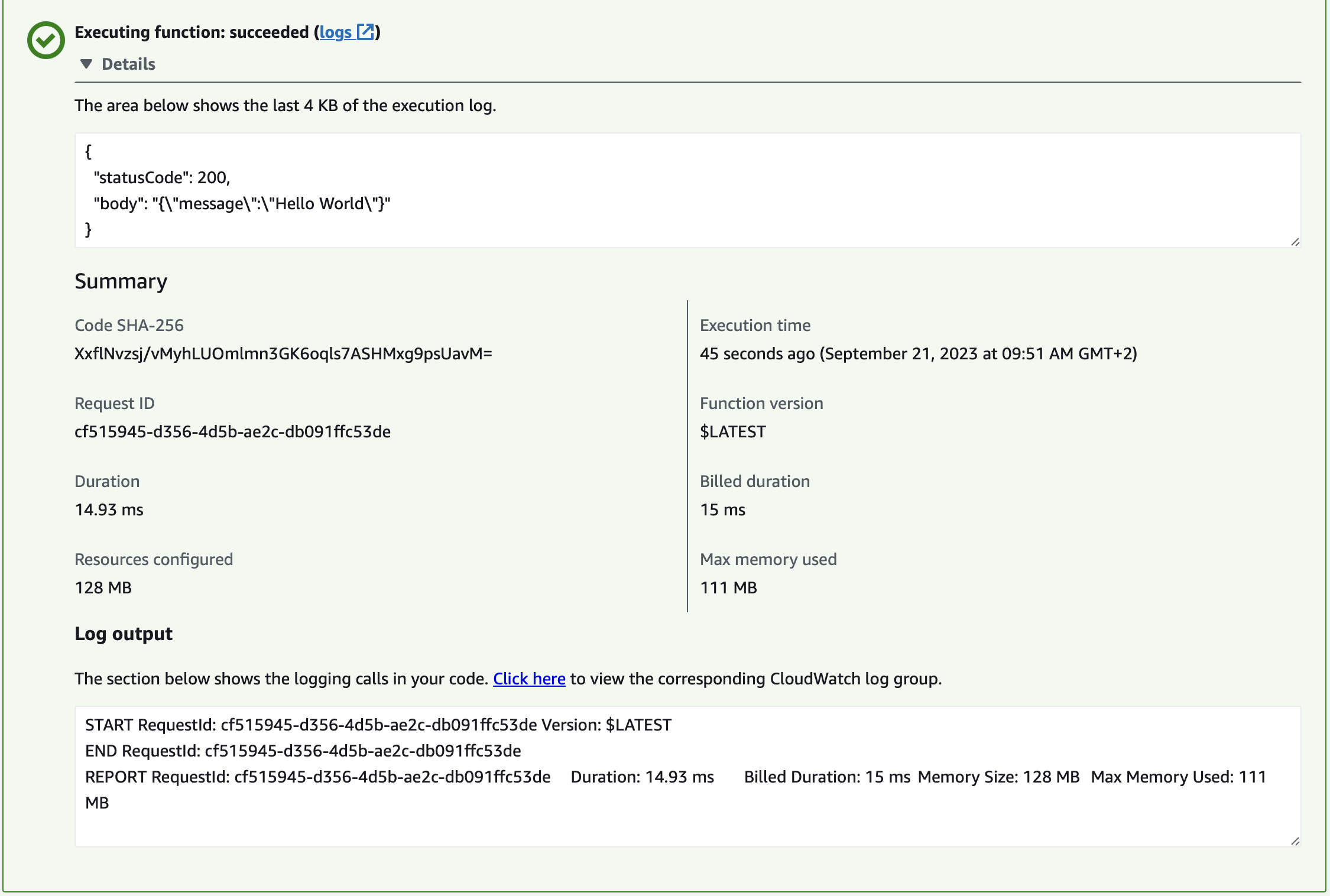

8.3. Test

You can test it easily.

    {
      "path": "/",
      "httpMethod": "GET",
      "headers": {
        "Accept": "application/json"
      }
    }

You should see a 200 response.

9. Next Steps

Explore more features with Micronaut Guides.

Read more about:

10. Help with the Micronaut Framework

The Micronaut Foundation sponsored the creation of this Guide. A variety of consulting and support services are available.

11. License

All guides are released with an Apache License 2.0 for the code and a Creative Commons Attribution 4.0 license for the writing and media (images…).